4.4. Finding Eigenvalues and Eigenvectors¶

We will now discuss the mathematical relationships between a matrix and its eigenvalues and eigenvectors. The relationships lead to a simple strategy for calculating the eigenvalues and eigenvectors. Unfortunately, the simple approach is insufficient for matrices larger than \(2{\times}2\) because finding the eigenvalues requires factoring a polynomial. The quadratic equation is all that we need for a \(2{\times}2\) matrix; however, we don’t have good factoring procedures for larger polynomials. Still, the relationships and equations presented here are foundational for applying eigenvalues and eigenvectors to engineering problems and to the iterative algorithms used to find the eigenvalues and eigenvectors of a larger matrix.

Note

We present a simple iterative procedure in The Power Method that finds the largest and most important eigenvalue. Finding Eigenvectors describes how to find the eigenvector paired to a known eigenvalue. The more challenging task of computing all of the eigenvalues and eigenvectors of a matrix is referred to as the eigenvalue problem. Although eigenvalues were first discovered in the eighteenth century, the first successful solution to the eigenvalue problem was not developed until 1959 [UHLIG09]. Some of the significant milestones in the search for a solution to the eigenvalue problem are discussed in History of the Eigenvalue Problem. Orthogonal Matrix Factoring presents the first two iterative algorithms developed to solve the eigenvalue problem.

We will begin with the equation for eigenvectors and eigenvalues, and insert an identity matrix so that both sides have a matrix multiplied by a vector.

Then we rearrange the equation to find the characteristic eigenvalue equation.

We are not interested in the trivial solution to equation (4.1) where \(\bm{x} = \bm{0}\). Eigenvectors are defined to be nonzero vectors. The solution to the characteristic eigenvalue equation only exists when the columns of the matrix \(\mathbf{A} - \lambda\,\bf{I}\) form a linear combination with \(\bm{x}\) yielding the zero vector. The linear dependence of the columns of the characteristic equation means that it is singular, having a zero determinant.

4.4.1. Finding Eigenvalues¶

The \(n\) scalar eigenvalues, \(\{\lambda_1, \lambda_2, \ldots, \lambda_n\}\), can be viewed as the shift of the matrix’s main diagonal that will make the characteristic matrix singular. The Eigenvalues are found by subtracting \(\lambda\) along the diagonal and finding the set of \(\lambda\)s for which the determinant is zero.

Note

Since an eigenvalue shifts the matrix’s diagonal such that the matrix is singular, a singular matrix has a zero-valued eigenvalue for each dependent row or column. Diagonalization could be used to find the rank of a matrix, but the SVD calculation is faster Rank from the SVD).

The determinant yields a degree-\(n\) polynomial, which can be factored to find the eigenvalue roots.

For the generalized \(2{\times}2\) matrix, the coefficient of the \(\lambda\) term in the quadratic equation is the negative of the sum of the matrix diagonal (the trace), and the constant term is the determinant of the matrix.

The quadratic equation simplifies this result for \(2{\times}2\) matrices. We will define variables \(m\) as the mean of the diagonal elements and \(p\) as the determinant of the matrix. Then we have a simple equation for the two eigenvalues.

In [1]: import numpy as np

In [2]: from scipy.linalg import eig

In [3]: A = np.array([[-6, -1], [-8, -4]], dtype=float); print(A)

[[-6. -1.]

[-8. -4.]]

In [4]: L, X = eig(A)

In [5]: L

Out[5]: array([-8.+0.j, -2.+0.j])

In [6]: X

Out[6]:

array([[-0.4472, 0.2425],

[-0.8944, -0.9701]])

In [7]: from math import sqrt

In [8]: m = -5

In [9]: p = 24 - 8 # p = 16

In [10]: l1 = m - sqrt(m**2 - p); print(l1)

-8.0

In [11]: l2 = m + sqrt(25 - 16); print(l2)

-2.0

There are two problems with finding the eigenvalues of matrices larger than \(2{\times}2\) using this procedure. First, calculating the determinant of a large matrix is slow. Secondly, we do not have acceptable methods for factoring larger polynomials. There are closed-form equations for degree-3 and degree-4 polynomials. However, unlike the quadratic equation, those equations are a mess—long and complicated. The polynomial factoring problem has confounded mathematicians for centuries. In 1823, Niels Henrik Abel proved that a direct algorithm cannot find the roots of a polynomial of degree greater than four [HADLOCK78]. Iterative algorithms are required to factor a polynomial of degree-5 or more, or to find the eigenvalues of a matrix larger than \(4{\times}4\).

In practice, iterative algorithms are used to find the eigenvalues of matrices larger than \(2{\times}2\). Orthogonal Matrix Factoring presents iterative algorithms for finding the full eigendecomposition, but the iterative power method may be used to find the largest eigenvalue.

4.4.2. Roots of a Polynomial by Eigenvalues¶

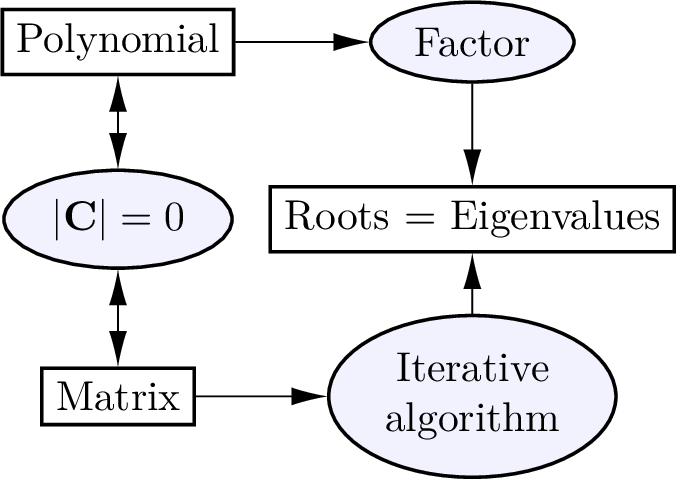

As illustrated in figure Fig. 4.2, an algorithm to find the eigenvalues of the matrix provides an iterative algorithm for factoring a polynomial. One should factor a polynomial by expressing it as a matrix, and then the polynomial’s roots will be the matrix’s eigenvalues.

Fig. 4.2 The roots of a polynomial and the eigenvalues of a corresponding matrix are the same. It is faster and simpler to find the eigenvalues using an iterative algorithm rather than to factor a polynomial.¶

Let \(n\) be the degree of the polynomial, and let the row vector \(p\) hold the polynomial coefficients. Then, build a shifted identity matrix and use the coefficients in the first row. The Python commands below use the algorithm to find the roots of the polynomial \(f(x) = x^3 - 4x^2 + x + 6 = 0\).

# n = degree of the polynomial coefficients

In [34]: n = 3

In [35]: A = np.eye(n, k=-1)

In [36]: p = np.array([[ 1, -4, 1, 6]])

In [37]: A[[0], :] = -p[[0],1:]/p[0, 0]

In [38]: print(A)

[[ 4. -1. -6.]

[ 1. 0. 0.]

[ 0. 1. 0.]]

In [39]: r = eigvals(A); print(r)

[ 3.+0.j 2.+0.j -1.+0.j]

The determinant of the characteristic equation of \(\bf{A}\) has the same coefficients as \(f(x)\), thus the eigenvalues of \(\m{A}\) are the roots of \(f(x)\).

The argument passed to the roots function is a row vector containing

the coefficients of the polynomial.

In [46]: r = np.roots(np.array([1, -4, 1, 6])); print(r)

[ 3. 2. -1.]

The poly function is the inverse of roots:

In [47]: np.poly(r)

Out[47]: array([ 1., -4., 1., 6.])

4.4.3. Finding Eigenvectors¶

The eigenvectors, \(\bm{x}_i\) (one per eigenvalue), come from the null solution to the characteristic equation.

The eigenvectors can be found with elimination or with the null

function, which uses the SVD Null Space).

We will use elimination here to find the eigenvectors.

Add \(-1/2\) of row 1 to row 2 and then divide row 1 by \(4\):

The second row of zeros occurs because it is a singular matrix. So, we have a free variable, and can set one variable to any desired value (\(x_{1a} = 1\)).

Add \(2\) of row 1 to row 2 and then divide row 1 by \(-1\):

Note that the null_space and eig functions from scipy.linalg

normalize the eigenvector so that its length is one. The following example

shows that eigenvectors may be multiplied by a scalar to match the manual

calculations.

In [13]: from scipy.linalg import null_space

In [14]: A = np.array([[2, 2], [2, -1]])

# the eigenvalues

In [15]: l1 = -2; l2 = 3

In [16]: C1 = A - l1*np.eye(2); print(C1)

[[4. 2.]

[2. 1.]]

# normalized eigenvector

In [17]: x1 = null_space(C1); print(x1)

[[ 0.4472]

[-0.8944]]

# scaled eigenvector

In [18]: x1 = x1/x1[0]; print(x1)

[[ 1.]

[-2.]]

In [19]: C2 = A - l2*np.eye(2); print(C2)

[[-1. 2.]

[ 2. -4.]]

# normalized eigenvector

In [20]: x2 = null_space(C2); print(x2)

[[-0.8944]

[-0.4472]]

# scaled eigenvector

In [21]: x2 = x2/x2[1]; print(x2)

[[2.]

[1.]]